Difference between revisions of "GTC SC11"

Revision as of 00:17, 29 November 2011

Background

In the fall of 2011, several performance studies were conducted on a port of the GTC application to GPUs (full paper).

Experiment setup

[http://keeneland.gatech.edu/ Keeneland] was chosen as the site for running these experiments, and it is accessible to the developers as well.

The GNU compilers were chosen to minimize conflicts with CUDArt, which does not support other compilers.

Here is the Makefile used:

# Define the following to 1 to enable build

BENCH_GTC_MPI = 1

BENCH_CHARGEI_PTHREADS = 0

BENCH_PUSHI_PTHREADS = 0

BENCH_SERIAL = 0

SDK_HOME = /nics/c/home/biersdor/NVIDIA_GPU_Computing_SDK/

CUDA_HOME = /sw/keeneland/cuda/4.0/linux_binary

NVCC_HOME = $(CUDA_HOME)

TAU_MAKEFILE=/nics/c/home/biersdor/tau2/x86_64/lib/Makefile.tau-cupti-mpi-pdt-openmp-opari

TAU_OPTIONS='-optPdtCOpts=-DPDT_PARSE -optVerbose -optShared -optTauSelectFile=select.tau'

TAU_FLAGS=-tau_makefile=$(TAU_MAKEFILE) -tau_options=$(TAU_OPTIONS)

CC = tau_cc.sh $(TAU_FLAGS)

MPICC = tau_cc.sh $(TAU_FLAGS)

NVCC = nvcc

NVCC_FLAGS = -gencode=arch=compute_20,code=\"sm_20,compute_20\" -gencode=arch=compute_20,code=\"sm_20,compute_20\" -m64 --compiler-options '-finstrument-functions -fno-strict-aliasing' -I$(NVCC_HOME)/include -I. -DUNIX -O3 -DGPU_ACCEL=1 -I./ -I$(SDK_HOME)/C/common/inc -I$(SDK_HOME)/shared/inc

NVCC_LINK_FLAGS = -fPIC -m64 -L$(NVCC_HOME)/lib64 -L$(SDK_HOME)/shared/lib -L$(SDK_HOME)/C/lib -L$(SDK_HOME)/C/common/lib/linux -lcudart -lstdc++

CFLAGS = -DUSE_MPI=1 -DGPU_ACCEL=1

CFLAGSOMP = -fopenmp

COPTFLAGS = -std=c99

#CFLAGSOMP = -mp=bind

#COPTFLAGS = -fast

CDEPFLAGS = -MD

CLDFLAGS = -limf $(NVCC_LINK_FLAGS)

MPIDIR =

CFLAGS += -I$(CUDA_HOME)/include/

EXEEXT = _keeneland_opt_gnu_tau_pdt

AR = ar

ARCRFLAGS = cr

RANLIB = ranlib

PDT was chosen to allow for event filtering. Here is the select file used:

BEGIN_EXCLUDE_LIST

double RngStream_RandU01(RngStream)

double U01(RngStream)

END_EXCLUDE_LIST

Performance Results

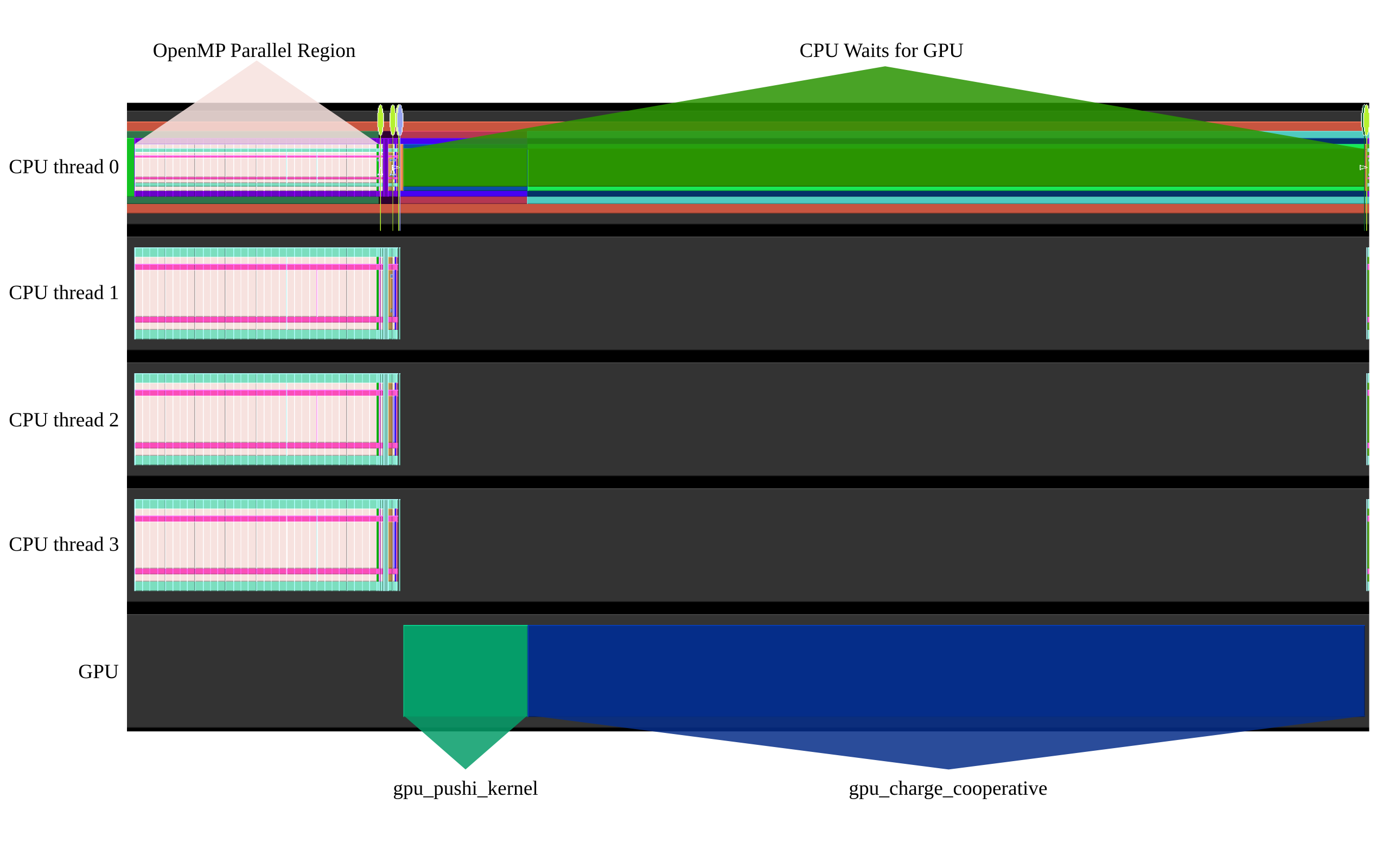

Here are some performance results that show the overall execution model:

This show a trace of a single execution on one MPI process (Multiple nodes/gpus can be utilized as well, performance behavior is similar across each process).
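A trace like the one above could be collected roughly as follows. This is a minimal sketch rather than the exact procedure used for these experiments: the source does not record the run commands, so the executable name (`GTC_EXE`), the single-process `mpirun` launch, and the output name `gtc.slog2` are assumptions. The TAU pieces (`TAU_TRACE`, `tau_treemerge.pl`, `tau2slog2`) are standard TAU tracing tools. Capturing GPU events may need additional runtime options that are not documented here.

```shell
#!/bin/sh
# Sketch: collect a TAU trace for one MPI process and convert it for Jumpshot.
# GTC_EXE is a hypothetical placeholder; the source does not name the binary.
GTC_EXE="${GTC_EXE:-./gtc_binary_placeholder}"

collect_trace() {
    # Ask TAU to write event traces in addition to profiles.
    export TAU_TRACE=1
    # Run a single MPI process, matching the single-process trace shown above.
    mpirun -np 1 "$GTC_EXE" || return 1
    # Merge the per-process trace files into tau.trc and tau.edf.
    tau_treemerge.pl || return 1
    # Convert the merged trace to SLOG2 so that Jumpshot can display it.
    tau2slog2 tau.trc tau.edf -o gtc.slog2
}

# Only attempt the run when the required tools are actually on PATH.
if command -v tau_treemerge.pl >/dev/null 2>&1 && command -v mpirun >/dev/null 2>&1; then
    collect_trace && echo "trace written to gtc.slog2"
else
    echo "TAU or mpirun not found; skipping trace collection"
fi
```

The resulting `gtc.slog2` file can then be opened with `jumpshot gtc.slog2`. Wrapping the steps in a function keeps the script harmless on machines without TAU installed.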