Guide:Opteron NUMA Analysis

| (13 intermediate revisions by the same user not shown) | |||

| Line 14: | Line 14: | ||

important, therefore, to ensure that memory accesses are made to local RAM as | important, therefore, to ensure that memory accesses are made to local RAM as | ||

often possible. | often possible. | ||

| − | |||

| − | |||

== Code == | == Code == | ||

| Line 132: | Line 130: | ||

3: time = 7.97128 seconds | 3: time = 7.97128 seconds | ||

</pre> | </pre> | ||

| + | |||

| + | CPU's 0 and 1 are the two cores of the first processor, and CPU's 2 and 3 are the two cores of the second processor. We clearly see that when the memory is allocated from the other CPU the time for the memory test increases from about 8 seconds to about 10.3 seconds. | ||

== Instrumentation == | == Instrumentation == | ||

| − | Now, we add some (manual) instrumentation using [[Phase_Profiling|phases] and [[Dynamic_Timers|dynamic timers]]. | + | Now, we add some (manual) instrumentation using [[Phase_Profiling|phases]] and [[Dynamic_Timers|dynamic timers]]. |

#include <sched.h> | #include <sched.h> | ||

| Line 220: | Line 220: | ||

} | } | ||

| − | == Profile == | + | == Profile Results== |

| − | Time: | + | Wallclock Time: |

<pre> | <pre> | ||

NODE 0;CONTEXT 0;THREAD 0: | NODE 0;CONTEXT 0;THREAD 0: | ||

| Line 301: | Line 301: | ||

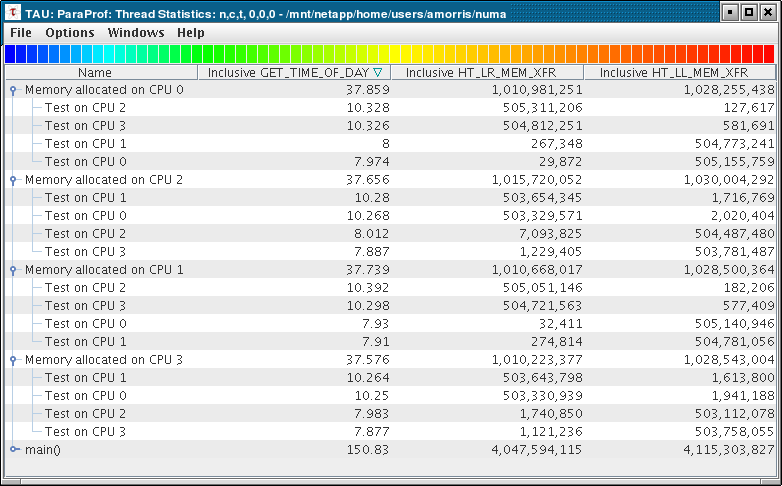

[[Image:numa.png]] | [[Image:numa.png]] | ||

| + | |||

| + | We see that when accessing remote memory, the HT_LR_MEM_XFR counter tracks the transfer. Likewise the HT_LL_MEM_XFR counter tracks the Local-to-Local transfers. Here, there are about 500 million transfers, and in each case we see how many are local and how many are remote. Using these metrics you can determine if you program is making good use of data locality in an AMD Opteron NUMA system. | ||

| + | |||

| + | == Profile Data == | ||

| + | |||

| + | [[Media:numa.ppk|Packed Profile]] | ||

| + | |||

| + | [http://proton.nic.uoregon.edu/javaws/paraprof-numa.jnlp Java Web Start link] | ||

Latest revision as of 15:22, 12 March 2007

Introduction

NUMA (Non-Uniform Memory Access) machines are widespread in the HPC community. General explanations of NUMA can be found elsewhere on the web; here we examine performance analysis on the AMD Opteron NUMA architecture.

Details on the AMD Opteron NUMA architecture are given here:

http://developer.amd.com/article_print.jsp?id=18

http://developer.amd.com/articlex.jsp?id=30

Each Opteron processor (socket, not core) has its own memory controller and local RAM. To access RAM attached to another socket, it must go over the HyperTransport bus. It is therefore important to ensure that memory accesses go to local RAM as often as possible.
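To see why locality matters, one can treat a run's memory time as a weighted mix of local and remote accesses. The sketch below is a deliberately simple linear model (an illustrative assumption, not a formula from the AMD documentation); mixedTime is a hypothetical name, and the example inputs are the local and remote memcpy timings measured in the Output section (about 7.95 s and 10.3 s).

```cpp
#include <cassert>

// Hypothetical linear cost model: a fraction `localFraction` of the work runs
// at local-memory speed (tLocal) and the remainder at remote speed (tRemote).
double mixedTime(double localFraction, double tLocal, double tRemote) {
    assert(localFraction >= 0.0 && localFraction <= 1.0);
    return localFraction * tLocal + (1.0 - localFraction) * tRemote;
}
```

For instance, mixedTime(0.5, 7.95, 10.3) evaluates to about 9.13 seconds: even if only half of the accesses go remote, the run is roughly 15% slower than an all-local run.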

Code

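The following short C++ program measures NUMA memory speed. For each CPU, it allocates and touches a 512 MB buffer while pinned to that CPU, then times a repeated memcpy within that buffer from every CPU in turn.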

```cpp
#include <sched.h>      // sched_getaffinity, sched_setaffinity, CPU_* macros
#include <stdio.h>
#include <stdlib.h>
#include <sys/time.h>
#include <string.h>

#define MEM_MB   512
#define MEM_SIZE (MEM_MB * 1024L * 1024L)   // bytes allocated per test
#define ITER     40                          // memcpy repetitions per timing

// Wall-clock time in microseconds.
double getTime() {
  struct timeval tp;
  gettimeofday(&tp, 0);
  return (double) tp.tv_sec * 1e6 + tp.tv_usec;
}

// Number of CPUs this process is allowed to run on.
int getNumCPU() {
  cpu_set_t mask;
  if (sched_getaffinity(0, sizeof(cpu_set_t), &mask)) {
    fprintf(stderr, "Unable to retrieve affinity\n");
    exit(1);
  }
  int nproc = 0;
  for (int i = 0; i < CPU_SETSIZE; i++) {
    if (CPU_ISSET(i, &mask)) {
      nproc++;
    }
  }
  return nproc;
}

// Copy the second half of the buffer onto the first half, ITER times.
void memtest(char *ptr) {
  for (int i = 0; i < ITER; i++) {
    memcpy(ptr, ptr + (MEM_SIZE / 2), MEM_SIZE / 2);
  }
}

// Pin the calling process to a single CPU.
void setCPU(int cpu) {
  cpu_set_t mask;
  CPU_ZERO(&mask);
  CPU_SET(cpu, &mask);
  if (sched_setaffinity(0, sizeof(cpu_set_t), &mask)) {
    fprintf(stderr, "Unable to set affinity to cpu %d\n", cpu);
    exit(1);
  }
}

void test(int cpu, int nproc) {
  setCPU(cpu);
  char *ptr = (char*) malloc(MEM_SIZE);
  if (!ptr) {
    fprintf(stderr, "failed to malloc\n");
    exit(1);
  }
  printf("\nMemory allocated on cpu %d\n", cpu);

  // Make sure it all gets paged in. Under Linux's default first-touch policy,
  // each page is placed on the node of the CPU that first writes it (here `cpu`).
  for (long j = 0; j < MEM_SIZE; j++) {
    ptr[j] = j;
  }

  // Run the same copy test from every CPU in turn.
  for (int i = 0; i < nproc; i++) {
    setCPU(i);
    double start = getTime();
    memtest(ptr);
    double end = getTime();
    printf("%d: time = %G seconds\n", i, (end - start) / (1000 * 1000));
  }
  free(ptr);
}

int main(int argc, char **argv) {
  int nproc = getNumCPU();
  for (int i = 0; i < nproc; i++) {
    test(i, nproc);
  }
  return 0;
}
```
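A pinning step that fails silently would make every timing meaningless, so it can be worth confirming where the thread actually runs. The sketch below uses a hypothetical helper, pinAndVerify, that pins the calling thread and then reads back the current CPU with glibc's sched_getcpu(). It assumes Linux with glibc, where g++ defines _GNU_SOURCE by default so the cpu_set_t macros and sched_getcpu() are visible.

```cpp
#include <cassert>
#include <cstdio>
#include <sched.h>  // sched_setaffinity, sched_getcpu, CPU_ZERO, CPU_SET

// Hypothetical helper: pin the calling thread to `cpu` and confirm that the
// scheduler placed it there. Returns false instead of continuing silently.
bool pinAndVerify(int cpu) {
    // CPU_SET silently ignores out-of-range indices, so check up front.
    assert(cpu >= 0 && cpu < CPU_SETSIZE);
    cpu_set_t mask;
    CPU_ZERO(&mask);
    CPU_SET(cpu, &mask);
    if (sched_setaffinity(0, sizeof(cpu_set_t), &mask) != 0) {
        perror("sched_setaffinity");
        return false;
    }
    // On Linux the thread should already be running on `cpu` at this point,
    // because its mask now contains only that CPU.
    return sched_getcpu() == cpu;
}
```

A call such as pinAndVerify(2) could stand in for setCPU(2) in test(), with the measurement skipped or aborted when it returns false.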

Output

The following is the output of this program on a dual-socket system with two dual-core Opteron 285 processors.

```
Memory allocated on cpu 0
0: time = 7.95079 seconds
1: time = 7.93437 seconds
2: time = 10.2906 seconds
3: time = 10.3224 seconds

Memory allocated on cpu 1
0: time = 7.94089 seconds
1: time = 7.95811 seconds
2: time = 10.2629 seconds
3: time = 10.3479 seconds

Memory allocated on cpu 2
0: time = 10.3138 seconds
1: time = 10.3115 seconds
2: time = 7.88206 seconds
3: time = 7.961 seconds

Memory allocated on cpu 3
0: time = 10.3013 seconds
1: time = 10.3484 seconds
2: time = 7.9039 seconds
3: time = 7.97128 seconds
```

CPUs 0 and 1 are the two cores of the first processor, and CPUs 2 and 3 are the two cores of the second processor. When the memory was allocated (and paged in) by a core on the other processor, the time for the memory test rises from about 8 seconds to about 10.3 seconds, roughly 30% slower.
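The local/remote split can be computed directly from the output. The sketch below hard-codes the times printed above and assumes the contiguous core numbering just described, so that cpu / coresPerSocket gives the socket. That holds on this machine but is not guaranteed in general (lscpu shows the real layout). It averages same-socket and cross-socket runs and converts a time into a copy rate, using the fact that each memtest() call copies ITER * MEM_SIZE/2 = 40 * 256 MiB = 10 GiB. All names here are hypothetical.

```cpp
#include <cassert>

// Assumption: logical CPUs are numbered contiguously per socket (CPUs 0-1 on
// socket 0, CPUs 2-3 on socket 1), as on the machine described above.
int socketOf(int cpu, int coresPerSocket) { return cpu / coresPerSocket; }

// Times printed by the program above, in seconds: kTimes[allocCpu][runCpu].
const double kTimes[4][4] = {
    {7.95079, 7.93437, 10.2906, 10.3224},
    {7.94089, 7.95811, 10.2629, 10.3479},
    {10.3138, 10.3115, 7.88206, 7.961},
    {10.3013, 10.3484, 7.9039, 7.97128},
};

struct LocalRemote {
    double meanLocal;   // runs on the same socket as the allocating CPU
    double meanRemote;  // runs on the other socket
};

// Average the same-socket runs separately from the cross-socket runs.
LocalRemote splitByLocality(const double t[4][4], int coresPerSocket) {
    double sumLocal = 0.0, sumRemote = 0.0;
    int nLocal = 0, nRemote = 0;
    for (int alloc = 0; alloc < 4; alloc++) {
        for (int run = 0; run < 4; run++) {
            if (socketOf(alloc, coresPerSocket) == socketOf(run, coresPerSocket)) {
                sumLocal += t[alloc][run];
                nLocal++;
            } else {
                sumRemote += t[alloc][run];
                nRemote++;
            }
        }
    }
    assert(nLocal > 0 && nRemote > 0);
    return {sumLocal / nLocal, sumRemote / nRemote};
}

// Bytes copied by one memtest() call: ITER (40) copies of MEM_SIZE/2 (256 MiB).
const double kBytesPerTest = 40.0 * 256.0 * 1024.0 * 1024.0;

// Copy rate in bytes per second for one timed memtest() call.
double copyRate(double seconds) { return kBytesPerTest / seconds; }
```

With coresPerSocket = 2 this gives a mean of about 7.94 s for local runs and 10.31 s for remote runs, a ratio of about 1.30. That corresponds to roughly 1.35 GB/s versus 1.04 GB/s of memcpy throughput, counted as bytes copied per second, where each copied byte is read once and written once.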

Instrumentation

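Now we add some manual instrumentation using TAU phases and dynamic timers: each allocating CPU gets its own phase, and each CPU that runs the memory test gets its own timer.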

```cpp
#include <sched.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/time.h>
#include <string.h>
#include <TAU.h>

#define MEM_MB   512
#define MEM_SIZE (MEM_MB * 1024L * 1024L)
#define ITER     40

// Wall-clock time in microseconds.
double getTime() {
  struct timeval tp;
  gettimeofday(&tp, 0);
  return (double) tp.tv_sec * 1e6 + tp.tv_usec;
}

int getNumCPU() {
  cpu_set_t mask;
  if (sched_getaffinity(0, sizeof(cpu_set_t), &mask)) {
    fprintf(stderr, "Unable to retrieve affinity\n");
    exit(1);
  }
  int nproc = 0;
  for (int i = 0; i < CPU_SETSIZE; i++) {
    if (CPU_ISSET(i, &mask)) {
      nproc++;
    }
  }
  return nproc;
}

void memtest(char *ptr) {
  for (int i = 0; i < ITER; i++) {
    memcpy(ptr, ptr + (MEM_SIZE / 2), MEM_SIZE / 2);
  }
}

void setCPU(int cpu) {
  cpu_set_t mask;
  CPU_ZERO(&mask);
  CPU_SET(cpu, &mask);
  if (sched_setaffinity(0, sizeof(cpu_set_t), &mask)) {
    fprintf(stderr, "Unable to set affinity to cpu %d\n", cpu);
    exit(1);
  }
}

void test(int cpu, int nproc) {
  setCPU(cpu);
  char *ptr = (char*) malloc(MEM_SIZE);
  if (!ptr) {
    fprintf(stderr, "failed to malloc\n");
    exit(1);
  }
  printf("\nMemory allocated on cpu %d\n", cpu);

  // make sure it all gets paged in
  for (long j = 0; j < MEM_SIZE; j++) {
    ptr[j] = j;
  }

  for (int i = 0; i < nproc; i++) {
    // One dynamic timer per test CPU, e.g. "Test on CPU 2".
    char buf[128];
    snprintf(buf, sizeof(buf), "Test on CPU %d", i);
    TAU_PROFILE_TIMER_DYNAMIC(timer, buf, "", TAU_USER);
    setCPU(i);
    double start = getTime();
    TAU_PROFILE_START(timer);
    memtest(ptr);
    TAU_PROFILE_STOP(timer);
    double end = getTime();
    printf("%d: time = %G seconds\n", i, (end - start) / (1000 * 1000));
  }
  free(ptr);
}

int main(int argc, char **argv) {
  TAU_PROFILE("main()", "", TAU_DEFAULT);
  TAU_PROFILE_SET_NODE(0);
  int nproc = getNumCPU();
  for (int i = 0; i < nproc; i++) {
    // One dynamic phase per allocating CPU, e.g. "Memory allocated on CPU 0".
    char buf[128];
    snprintf(buf, sizeof(buf), "Memory allocated on CPU %d", i);
    TAU_PHASE_CREATE_DYNAMIC(phase, buf, "", TAU_USER);
    TAU_PHASE_START(phase);
    test(i, nproc);
    TAU_PHASE_STOP(phase);
  }
  return 0;
}
```
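The profile results contain two kinds of timer rows: flat rows such as "Test on CPU 2", which sum one timer over every phase, and callpath rows such as "Memory allocated on CPU 0 => Test on CPU 2", which keep each phase separate. As a conceptual model only (this is not how TAU is implemented, and PhaseProfile is a hypothetical class), the sketch below records each measurement under both keys. It times with std::chrono::steady_clock, a monotonic clock, rather than gettimeofday().

```cpp
#include <cassert>
#include <chrono>
#include <map>
#include <string>

// Hypothetical stand-in, NOT TAU's implementation: each measurement is added
// to a flat per-timer total and to a "phase => timer" callpath total.
class PhaseProfile {
public:
    void setPhase(const std::string& phase) { phase_ = phase; }

    // Time one call of `work` with a monotonic clock and attribute it.
    template <class F>
    void timeCall(const std::string& timer, F work) {
        auto start = std::chrono::steady_clock::now();
        work();
        auto end = std::chrono::steady_clock::now();
        record(timer, std::chrono::duration<double, std::micro>(end - start).count());
    }

    void record(const std::string& timer, double usec) {
        flat_[timer] += usec;
        callpath_[phase_ + " => " + timer] += usec;
    }

    double flat(const std::string& timer) const { return lookup(flat_, timer); }
    double callpath(const std::string& key) const { return lookup(callpath_, key); }

private:
    static double lookup(const std::map<std::string, double>& m, const std::string& k) {
        auto it = m.find(k);
        return it == m.end() ? 0.0 : it->second;
    }
    std::string phase_ = "main()";
    std::map<std::string, double> flat_;
    std::map<std::string, double> callpath_;
};
```

In this model, the four calls to "Test on CPU 2" (one per phase) add up in the flat total, just as the flat TAU row reports 4 calls, while each callpath entry holds a single call.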

Profile Results

Wallclock Time:

```
NODE 0;CONTEXT 0;THREAD 0:
---------------------------------------------------------------------------------------
%Time  Exclusive   Inclusive #Call #Subrs Inclusive Name
            msec  total msec              usec/call
---------------------------------------------------------------------------------------
100.0      0.134    2:30.830     1      4 150830250 main()
 25.1      1,231      37,858     1      4  37858640 Memory allocated on CPU 0
 25.1      1,231      37,858     1      4  37858640 main() => Memory allocated on CPU 0
 25.0      1,208      37,738     1      4  37738973 Memory allocated on CPU 1
 25.0      1,208      37,738     1      4  37738973 main() => Memory allocated on CPU 1
 25.0      1,210      37,656     1      4  37656354 Memory allocated on CPU 2
 25.0      1,210      37,656     1      4  37656354 main() => Memory allocated on CPU 2
 24.9      1,202      37,576     1      4  37576149 Memory allocated on CPU 3
 24.9      1,202      37,576     1      4  37576149 main() => Memory allocated on CPU 3
 24.3     36,714      36,714     4      0   9178556 Test on CPU 2
 24.2     36,453      36,453     4      0   9113340 Test on CPU 1
 24.1     36,422      36,422     4      0   9105516 Test on CPU 0
 24.1     36,387      36,387     4      0   9096986 Test on CPU 3
  6.9     10,391      10,391     1      0  10391755 Memory allocated on CPU 1 => Test on CPU 2
  6.8     10,328      10,328     1      0  10328079 Memory allocated on CPU 0 => Test on CPU 2
  6.8     10,325      10,325     1      0  10325531 Memory allocated on CPU 0 => Test on CPU 3
  6.8     10,298      10,298     1      0  10298334 Memory allocated on CPU 1 => Test on CPU 3
  6.8     10,279      10,279     1      0  10279634 Memory allocated on CPU 2 => Test on CPU 1
  6.8     10,267      10,267     1      0  10267595 Memory allocated on CPU 2 => Test on CPU 0
  6.8     10,263      10,263     1      0  10263815 Memory allocated on CPU 3 => Test on CPU 1
  6.8     10,250      10,250     1      0  10250194 Memory allocated on CPU 3 => Test on CPU 0
  5.3      8,011       8,011     1      0   8011762 Memory allocated on CPU 2 => Test on CPU 2
  5.3      7,999       7,999     1      0   7999613 Memory allocated on CPU 0 => Test on CPU 1
  5.3      7,982       7,982     1      0   7982628 Memory allocated on CPU 3 => Test on CPU 2
  5.3      7,974       7,974     1      0   7974093 Memory allocated on CPU 0 => Test on CPU 0
  5.3      7,930       7,930     1      0   7930183 Memory allocated on CPU 1 => Test on CPU 0
  5.2      7,910       7,910     1      0   7910297 Memory allocated on CPU 1 => Test on CPU 1
  5.2      7,887       7,887     1      0   7887206 Memory allocated on CPU 2 => Test on CPU 3
  5.2      7,876       7,876     1      0   7876873 Memory allocated on CPU 3 => Test on CPU 3
```
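The exclusive time of each phase (about 1.2 s) is its inclusive time minus the inclusive time of its four "Test on CPU" children. Using the usec/call values for "Memory allocated on CPU 0": 37,858,640 - (7,974,093 + 7,999,613 + 10,328,079 + 10,325,531) = 1,231,324 usec. That time is spent in test() outside the timed memtest() calls, most likely dominated by the loop that pages in the 512 MB buffer. The hypothetical helper below captures the arithmetic.

```cpp
#include <cassert>
#include <vector>

// Exclusive time of a profile node = its inclusive time minus the sum of its
// children's inclusive times. All values in microseconds.
long exclusiveUsec(long inclusiveUsec, const std::vector<long>& childInclusiveUsec) {
    long childSum = 0;
    for (long c : childInclusiveUsec) {
        childSum += c;
    }
    assert(childSum <= inclusiveUsec);
    return inclusiveUsec - childSum;
}
```

Applied to the phase above, exclusiveUsec(37858640, {7974093, 7999613, 10328079, 10325531}) returns 1231324, matching the 1,231 msec exclusive column.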

HyperTransport data transfer from local memory to remote memory (PAPI_NATIVE_HT_LR_MEM_XFR):

```
NODE 0;CONTEXT 0;THREAD 0:
---------------------------------------------------------------------------------------
%Counts   Exclusive    Inclusive #Call #Subrs Count/Call Name
             counts total counts
---------------------------------------------------------------------------------------
   25.2   1.019E+09    1.019E+09     4      0  254799257 Test on CPU 2
   25.0   1.012E+09    1.012E+09     4      0  252971114 Test on CPU 3
   24.9   1.008E+09    1.008E+09     4      0  251960076 Test on CPU 1
   24.9   1.007E+09    1.007E+09     4      0  251680698 Test on CPU 0
   12.5   5.053E+08    5.053E+08     1      0  505311206 Memory allocated on CPU 0 => Test on CPU 2
   12.5   5.051E+08    5.051E+08     1      0  505051146 Memory allocated on CPU 1 => Test on CPU 2
   12.5   5.048E+08    5.048E+08     1      0  504812251 Memory allocated on CPU 0 => Test on CPU 3
   12.5   5.047E+08    5.047E+08     1      0  504721563 Memory allocated on CPU 1 => Test on CPU 3
   12.4   5.037E+08    5.037E+08     1      0  503654345 Memory allocated on CPU 2 => Test on CPU 1
   12.4   5.036E+08    5.036E+08     1      0  503643798 Memory allocated on CPU 3 => Test on CPU 1
   12.4   5.033E+08    5.033E+08     1      0  503330939 Memory allocated on CPU 3 => Test on CPU 0
   12.4   5.033E+08    5.033E+08     1      0  503329571 Memory allocated on CPU 2 => Test on CPU 0
    0.2   7.094E+06    7.094E+06     1      0    7093825 Memory allocated on CPU 2 => Test on CPU 2
    0.0   1.741E+06    1.741E+06     1      0    1740850 Memory allocated on CPU 3 => Test on CPU 2
    0.0   1.229E+06    1.229E+06     1      0    1229405 Memory allocated on CPU 2 => Test on CPU 3
    0.0   1.121E+06    1.121E+06     1      0    1121236 Memory allocated on CPU 3 => Test on CPU 3
   25.0   5.881E+05    1.011E+09     1      4 1010668017 Memory allocated on CPU 1
   25.0   5.881E+05    1.011E+09     1      4 1010668017 main() => Memory allocated on CPU 1
   25.0   5.606E+05    1.011E+09     1      4 1010981251 Memory allocated on CPU 0
   25.0   5.606E+05    1.011E+09     1      4 1010981251 main() => Memory allocated on CPU 0
   25.1   4.129E+05    1.016E+09     1      4 1015720052 Memory allocated on CPU 2
   25.1   4.129E+05    1.016E+09     1      4 1015720052 main() => Memory allocated on CPU 2
   25.0   3.866E+05     1.01E+09     1      4 1010223377 Memory allocated on CPU 3
   25.0   3.866E+05     1.01E+09     1      4 1010223377 main() => Memory allocated on CPU 3
    0.0   2.748E+05    2.748E+05     1      0     274814 Memory allocated on CPU 1 => Test on CPU 1
    0.0   2.673E+05    2.673E+05     1      0     267348 Memory allocated on CPU 0 => Test on CPU 1
    0.0   3.241E+04    3.241E+04     1      0      32411 Memory allocated on CPU 1 => Test on CPU 0
    0.0   2.987E+04    2.987E+04     1      0      29872 Memory allocated on CPU 0 => Test on CPU 0
  100.0        1418    4.048E+09     1      4 4047594115 main()
```
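To quantify how concentrated the HyperTransport traffic is, the sketch below hard-codes the per-callpath HT_LR_MEM_XFR counts from the table above, again assumes that CPUs 0-1 and 2-3 form the two sockets, and computes the share of all counts that came from cross-socket tests. The names kLrXfr and crossSocketShare are hypothetical.

```cpp
#include <cassert>

// PAPI_NATIVE_HT_LR_MEM_XFR counts from the callpath rows above,
// indexed as kLrXfr[allocCpu][testCpu].
const long long kLrXfr[4][4] = {
    {29872LL,     267348LL,    505311206LL, 504812251LL},
    {32411LL,     274814LL,    505051146LL, 504721563LL},
    {503329571LL, 503654345LL, 7093825LL,   1229405LL},
    {503330939LL, 503643798LL, 1740850LL,   1121236LL},
};

// Fraction of all counts recorded while the test CPU was on a different
// socket from the allocating CPU (assumes contiguous per-socket numbering).
double crossSocketShare(const long long lr[4][4], int coresPerSocket) {
    long long cross = 0, total = 0;
    for (int alloc = 0; alloc < 4; alloc++) {
        for (int run = 0; run < 4; run++) {
            total += lr[alloc][run];
            if (alloc / coresPerSocket != run / coresPerSocket) {
                cross += lr[alloc][run];
            }
        }
    }
    assert(total > 0);
    return static_cast<double>(cross) / static_cast<double>(total);
}
```

For this run, crossSocketShare(kLrXfr, 2) is about 0.997: the eight cross-socket tests each record roughly 5.0E+08 transfers, while the eight same-socket tests together record fewer than 1.2E+07.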

Statistics Table display from ParaProf:

When a test accesses remote memory, the HT_LR_MEM_XFR (local-to-remote) counter records the transfers; likewise, the HT_LL_MEM_XFR counter records the local-to-local transfers. Here there are about 500 million transfers per test, and in each case we can see how many were local and how many were remote. Using these metrics, you can determine whether your program is making good use of data locality on an AMD Opteron NUMA system.
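One way to turn the two counters into a single locality metric is the fraction of HyperTransport memory transfers that were remote, LR / (LL + LR), together with a threshold above which a code region deserves a closer look. Both the metric and the threshold are illustrative assumptions rather than anything defined by AMD, PAPI, or TAU, and the function names are hypothetical.

```cpp
#include <cassert>

// Fraction of memory transfers that went to remote memory, given the
// local-to-local (ll) and local-to-remote (lr) counter values for a region.
double remoteFraction(unsigned long long ll, unsigned long long lr) {
    unsigned long long total = ll + lr;
    return total == 0 ? 0.0 : static_cast<double>(lr) / static_cast<double>(total);
}

// Hypothetical policy: flag a region whose remote fraction exceeds `threshold`.
bool needsLocalityWork(unsigned long long ll, unsigned long long lr, double threshold) {
    assert(threshold >= 0.0 && threshold <= 1.0);
    return remoteFraction(ll, lr) > threshold;
}
```

In practice, ll and lr would be the per-region HT_LL_MEM_XFR and HT_LR_MEM_XFR values from the profile; a region such as "Memory allocated on CPU 0 => Test on CPU 2" would be flagged, while its same-socket counterparts would not.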