MPAS-Ocean


[http://mpas.sourceforge.net MPAS-Ocean Sourceforge Page]


Latest revision as of 23:05, 2 November 2012


Overview

This page documents TAU profiling of MPAS-Ocean.

For the measurements below, the MPAS-Ocean code was modified to use TAU timers in place of its internal timers. This enables both MPI performance measurement and the collection of PAPI counters.

The MPAS-Ocean developers have collected profiles on Hopper, with 192 to 16800 processes, using MPI only (no OpenMP yet). In addition, full callpath and communication matrix profiles with 128 processes on Hopper have been collected.

These profiles are available through ParaProf and PerfExplorer. The client applications can connect to the performance database only from specific domains and only with authenticated access. Please contact the TAU team to request access to the raw data.

Below is a brief analysis of the application performance.

Performance Analysis

Scaling behavior

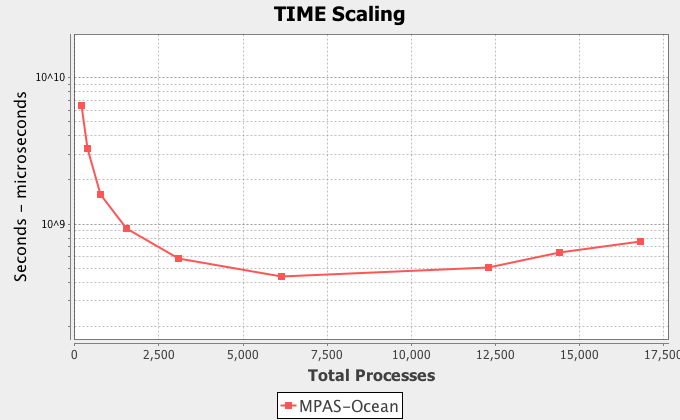

As mentioned above, the application was executed with 192 through 16800 processes in a strong scaling study (the total problem size did not change). The application scales poorly beyond 6144 processes, where communication dominates. This problem size may be too small for the larger process counts.
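For readers who want to quantify "poor scaling" from their own timings, the following is a minimal sketch of the usual strong-scaling metrics: speedup and parallel efficiency relative to the smallest run. The function name `strong_scaling` and the wall-clock times are hypothetical and are not measured MPAS-Ocean data; only the process counts are taken from the study.

```python
def strong_scaling(times_by_procs):
    """Return (procs, speedup, efficiency) rows relative to the smallest run.

    times_by_procs maps a process count to wall-clock time for the SAME
    total problem size (strong scaling).
    """
    procs = sorted(times_by_procs)
    base_p = procs[0]
    base_t = times_by_procs[base_p]
    rows = []
    for p in procs:
        speedup = base_t / times_by_procs[p]   # measured gain over the baseline
        ideal = p / base_p                     # gain under perfect scaling
        rows.append((p, speedup, speedup / ideal))
    return rows


# Hypothetical wall-clock times in seconds, for illustration only.
example_times = {192: 1000.0, 6144: 45.0, 16800: 40.0}

for p, s, e in strong_scaling(example_times):
    print(f"{p:6d} procs  speedup {s:7.2f}  efficiency {e:5.2f}")
```

An efficiency that falls well below 1.0 at the larger process counts is the numerical counterpart of the flattening curve in the plot.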

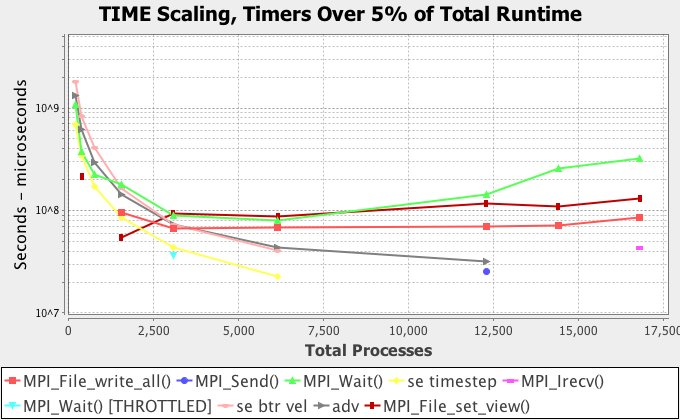

Broken down by timed region, the scaling behavior is as follows (beyond 12k processes, only MPI routines account for more than 5% of the total runtime):

MPI_Wait clearly dominates and, as the per-trial analysis shows, its time varies considerably across processes.

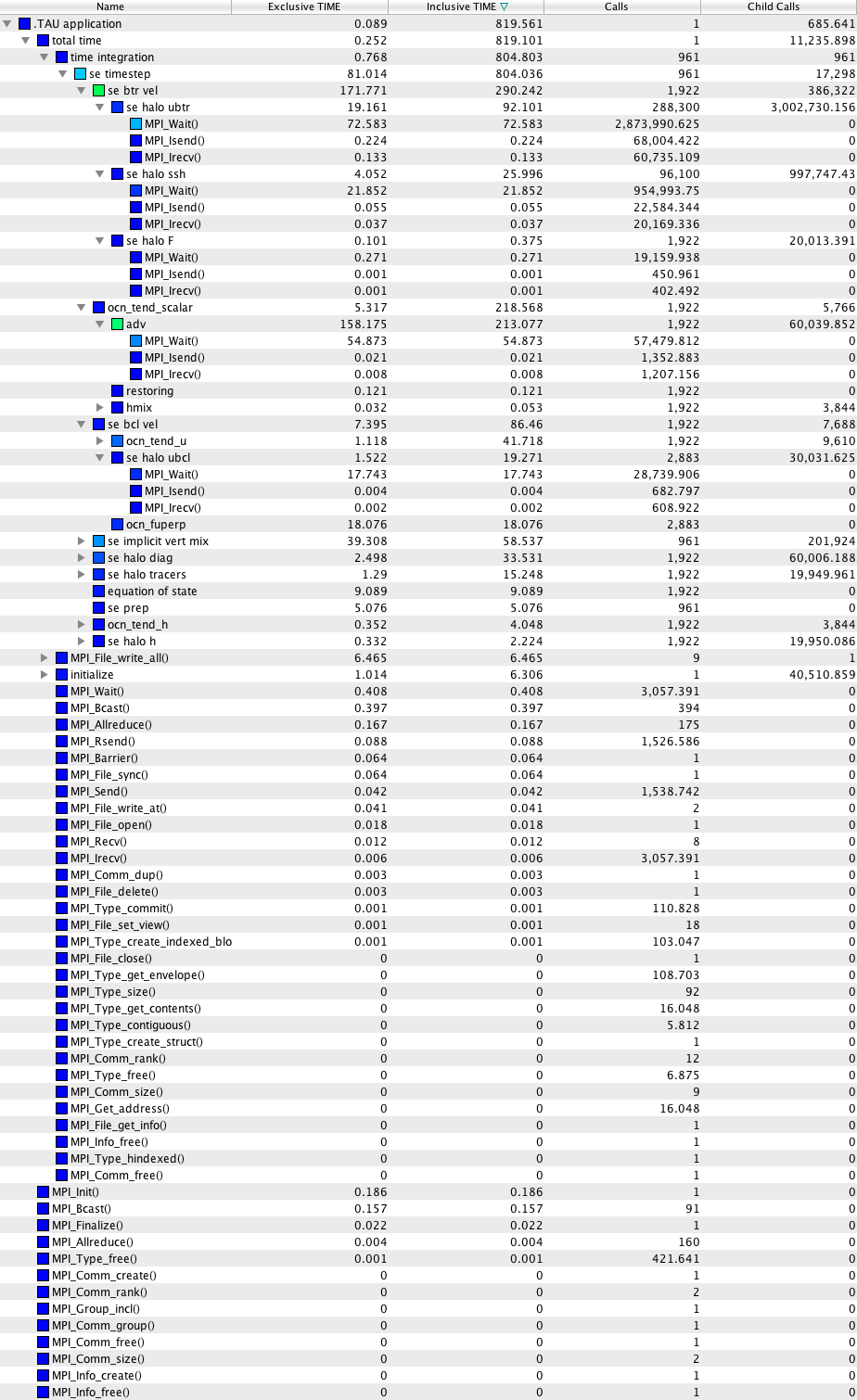
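The 5% cutoff used in the breakdown above can be applied to any set of per-region timings. The sketch below assumes exclusive times that sum to the total runtime; the function name `dominant_regions` and the sample numbers are hypothetical and do not come from the collected profiles.

```python
def dominant_regions(region_times, threshold=0.05):
    """Return (name, share) pairs whose share of total time exceeds threshold.

    region_times maps a timer name to its exclusive time; shares are
    computed against the sum of all entries. Results are sorted from the
    largest share to the smallest.
    """
    total = sum(region_times.values())
    if total <= 0:
        return []
    shares = ((name, t / total) for name, t in region_times.items())
    kept = [(name, share) for name, share in shares if share > threshold]
    return sorted(kept, key=lambda pair: pair[1], reverse=True)


# Hypothetical exclusive times in seconds, for illustration only.
sample = {"MPI_Wait": 62.0, "se btr vel": 20.0, "adv": 15.0, "coriolis": 3.0}

for name, share in dominant_regions(sample):
    print(f"{name:12s} {share:6.1%}")
```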

Detailed analysis of 128 process callpath profile

Detailed analysis of 128 process flat profile with communication matrix

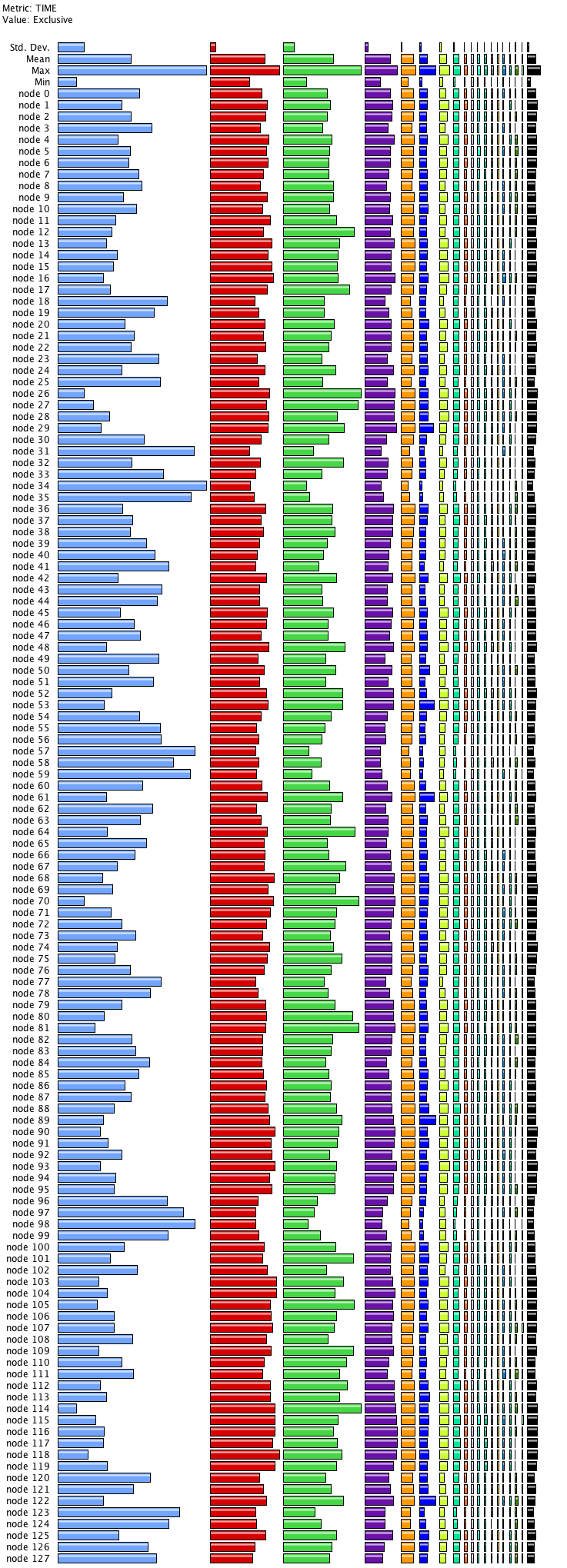

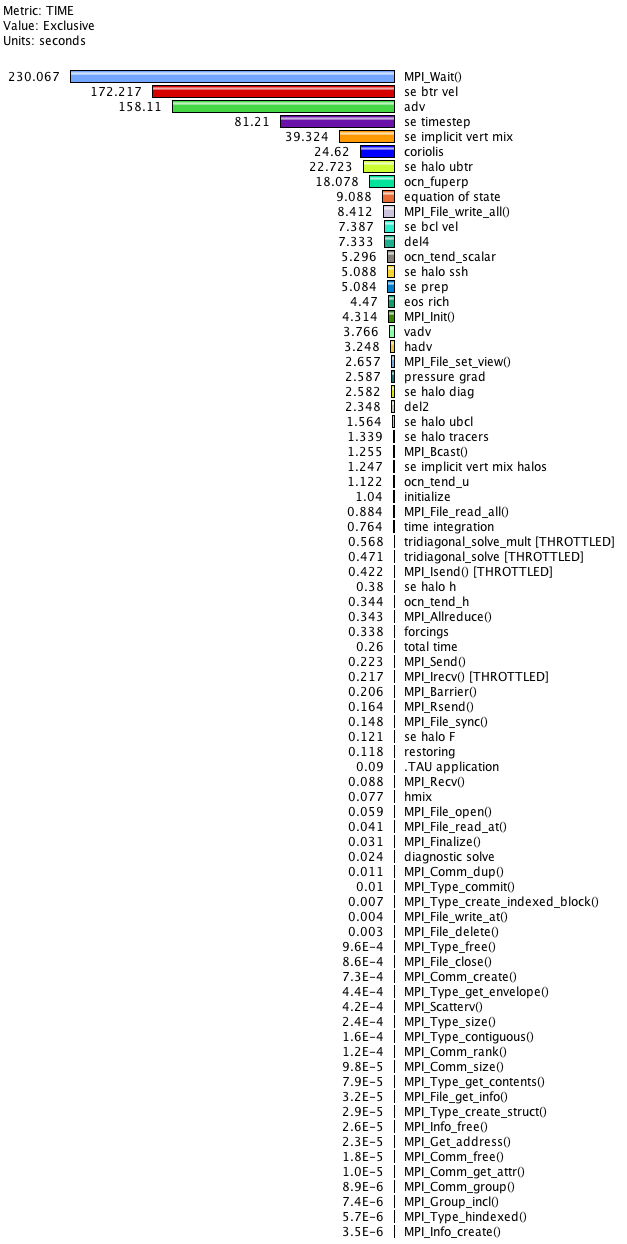

The functions in the profile view are, from left to right (also visible in the mean profile, below):

- MPI_Wait()

- se btr vel

- adv

- se timestep

- se implicit vert mix

- coriolis

- se halo ubtr

- ocn_fuperp

Clearly, MPI_Wait() dominates; it is the receive-side counterpart to the asynchronous MPI_Isend() calls.

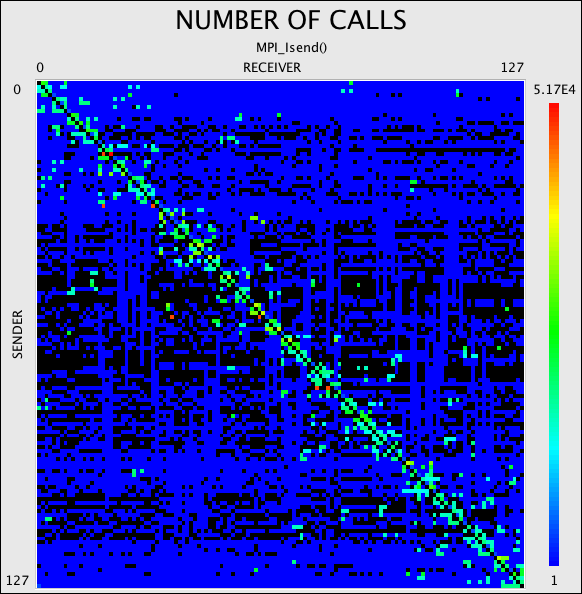

The communication matrix looks fairly diagonal, but it appears that nearly all nodes perform asynchronous communication with almost all other nodes at some point.
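Both observations above (mostly diagonal, yet nearly all-to-all) can be checked numerically on a sender-by-receiver matrix. This is a minimal sketch under the assumption that the matrix is a square list of lists holding bytes or message counts; the helper names `near_diagonal_fraction` and `partner_counts` and the tiny matrix are hypothetical.

```python
def near_diagonal_fraction(matrix, band):
    """Fraction of total traffic exchanged between ranks at most `band` apart."""
    total = 0
    near = 0
    for i, row in enumerate(matrix):
        for j, volume in enumerate(row):
            total += volume
            if abs(i - j) <= band:
                near += volume
    return near / total if total else 0.0


def partner_counts(matrix):
    """Number of distinct other ranks each sender communicates with."""
    return [
        sum(1 for j, volume in enumerate(row) if volume > 0 and j != i)
        for i, row in enumerate(matrix)
    ]


# Hypothetical 4-rank matrix: heavy neighbor traffic, light traffic elsewhere.
example = [
    [0, 100, 1, 1],
    [100, 0, 100, 1],
    [1, 100, 0, 100],
    [1, 1, 100, 0],
]

print(near_diagonal_fraction(example, band=1))
print(partner_counts(example))
```

A high near-diagonal fraction together with partner counts close to the number of ranks minus one would match the pattern described: most volume flows between neighboring ranks, but almost every pair exchanges something.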

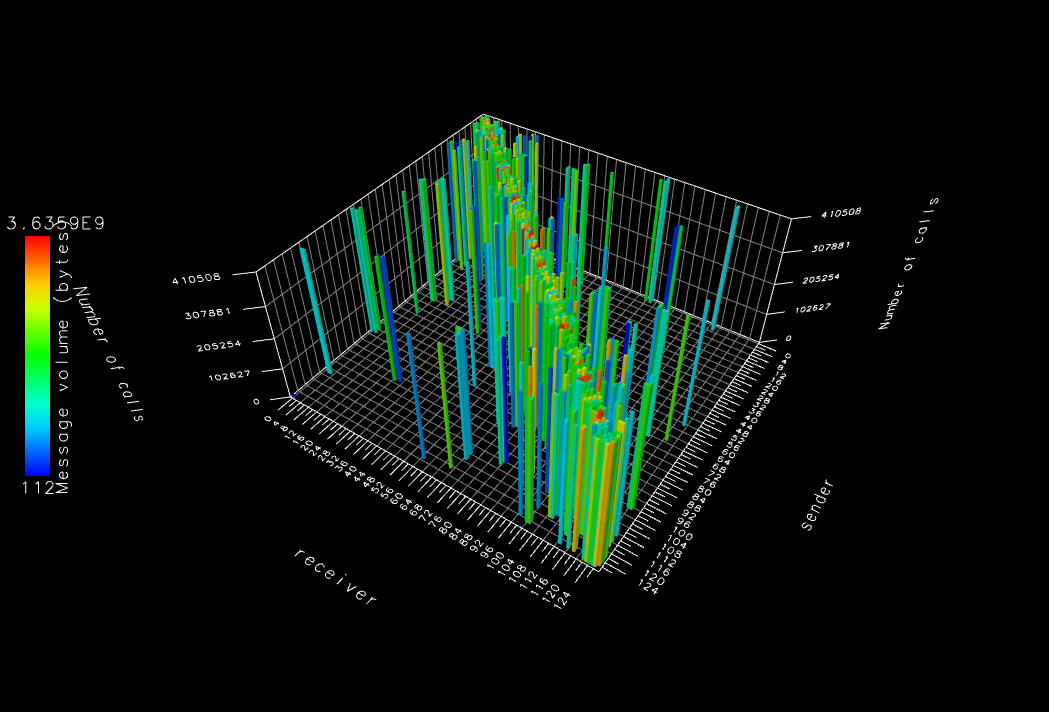

The 3D view of this same data shows the number of calls to MPI_Isend() (the bar heights) and the total data sent (the bar colors).

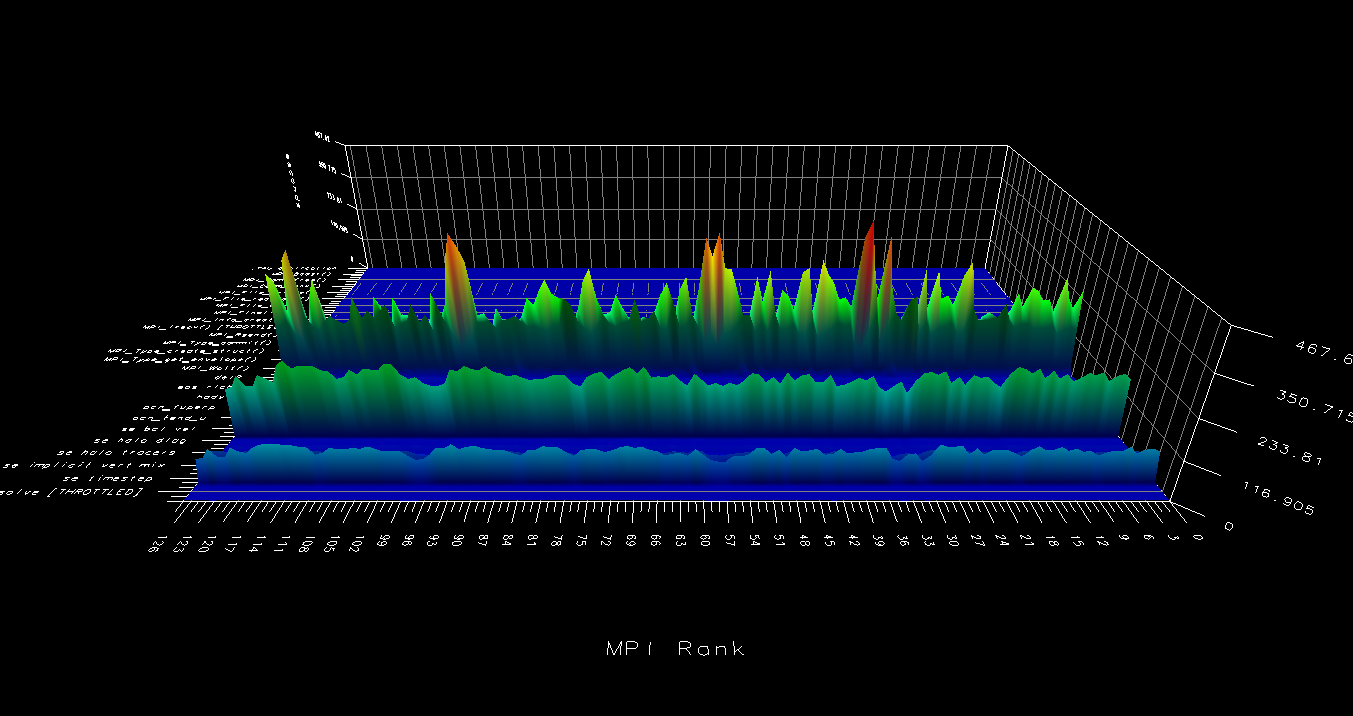

This view shows the profile in 3D. Clearly, spikes in MPI_Wait() times are correlated with valleys in the "se btr vel" and "adv" timers.
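The visual correlation noted above can be made quantitative with a Pearson coefficient computed across processes. The sketch below uses only the standard library; the function name `pearson` and the per-rank values are hypothetical and are not taken from the 128-process profile.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-rank exclusive times in seconds, for illustration only.
mpi_wait = [5.0, 1.0, 4.5, 0.8, 3.9, 1.2]
se_btr_vel = [3.0, 7.0, 3.5, 7.2, 4.0, 6.9]

print(pearson(mpi_wait, se_btr_vel))
```

A coefficient near -1 would mean that ranks spending less time in a compute timer spend correspondingly more time in MPI_Wait(), which is one common signature of load imbalance; it does not by itself identify the cause.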