Difference between revisions of "Uintah/AMR"


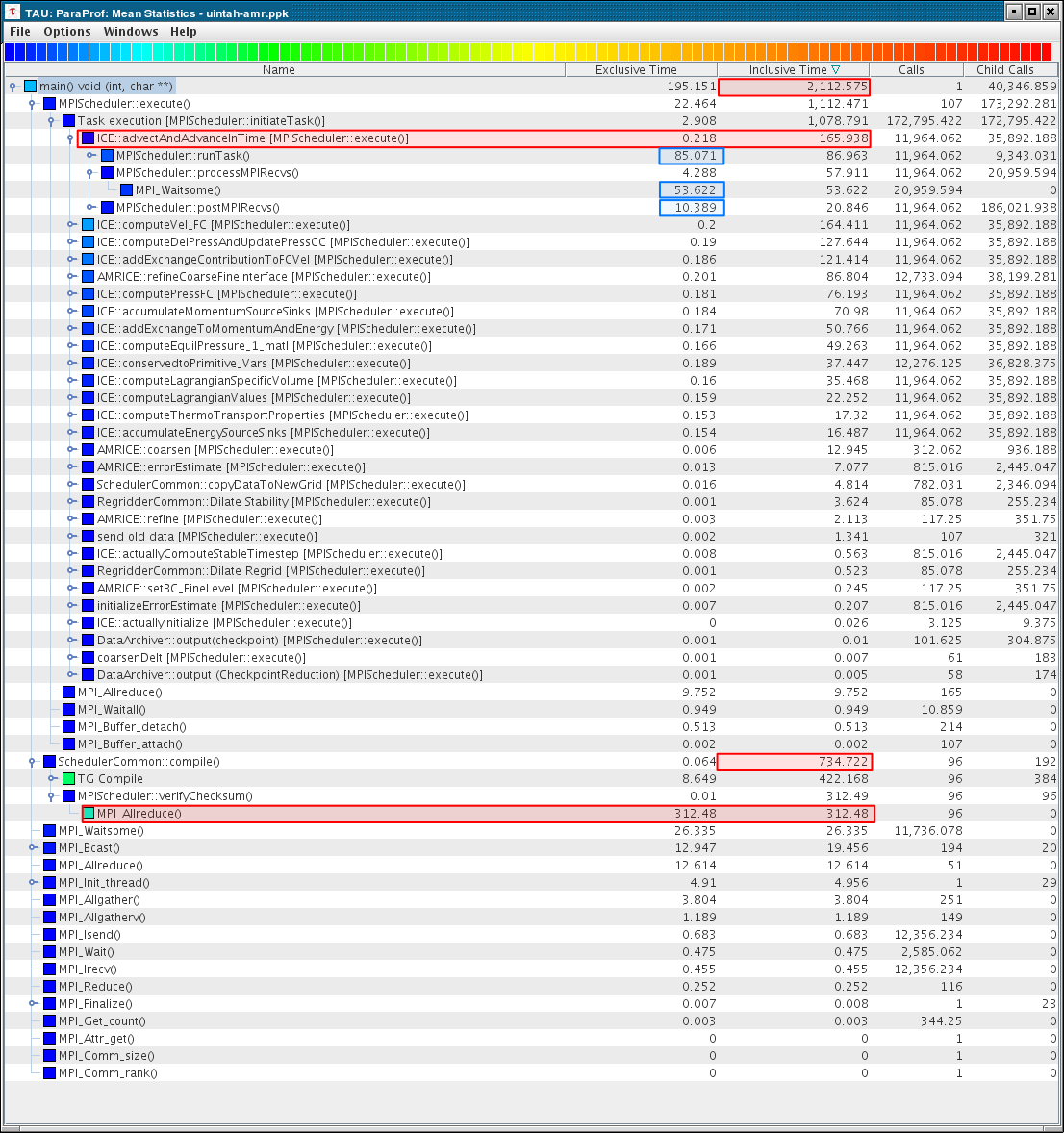


[[Image:UintahAMR-callpath.png]]

Revision as of 01:02, 26 March 2007

Basic Information

| Machine | Inferno (128-node, 2.6 GHz Xeon cluster) |

| Input File | hotBlob_AMRb.ups |

| Run size | 64 CPUs |

| Run time | about 35 minutes (2100 seconds) |

| Date | March 2007 |

Callpath Results

TAU was configured with:

-mpiinc=/usr/local/lam-mpi/include/ -mpilib=/usr/local/lam-mpi/lib -PROFILECALLPATH -useropt=-O3

Several key numbers are highlighted in the mean profile callpath view. First, the overall runtime is 2112.575 seconds. Next, 165.938 seconds are spent in the task ICE::advectAndAdvanceInTime.

Of those 165.938 seconds, 85.071 are spent in computation (runTask()) and 53.622 are spent in MPI_Waitsome().
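To put these numbers in proportion, the short sketch below works out each share of the task time. The timings are the ones quoted above; the variable names and the "other" remainder (whatever is left after runTask() and MPI_Waitsome()) are only illustrative and do not come from the TAU output.

```python
# Timings in seconds, taken from the mean profile quoted above.
total_runtime = 2112.575   # overall runtime
task_time = 165.938        # ICE::advectAndAdvanceInTime
compute_time = 85.071      # runTask()
waitsome_time = 53.622     # MPI_Waitsome()

# Assumption: whatever is not runTask() or MPI_Waitsome() is lumped
# together as "other"; the profile itself does not name this bucket.
other_time = task_time - compute_time - waitsome_time

compute_share = compute_time / task_time
waitsome_share = waitsome_time / task_time
other_share = other_time / task_time
task_share_of_run = task_time / total_runtime

print(f"runTask():      {compute_share:6.1%} of the task")
print(f"MPI_Waitsome(): {waitsome_share:6.1%} of the task")
print(f"other:          {other_share:6.1%} of the task")
print(f"task:           {task_share_of_run:6.1%} of the whole run")
```

Roughly half of the task is computation and about a third is waiting on communication, while the task as a whole is a small part of the run.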

Also notable: 734.722 seconds (about one third of the execution) are spent in the MPI_Allreduce() step of the task graph compilation. The nodes perform a simple checksum to verify that they all have the same graph. My guess is that the MPI_Allreduce() is simply acting as a synchronization point at the start of an iteration, and that the large amount of time spent there is due to an imbalance in the workload: some nodes reach it much earlier than others and then wait for the rest.
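The guess above can be illustrated with a toy model: if a blocking collective cannot complete until the last rank arrives, then each rank's time inside it is mostly the gap between its own arrival and the latest one. The sketch below is only that model, not a measurement. The arrival times are made up, the function name is hypothetical, and the collective's own cost is assumed to be negligible.

```python
from statistics import mean

def collective_wait_times(arrival_times, collective_cost=0.0):
    """Time each rank spends in a blocking collective, assuming it
    completes only once the last rank has arrived (a simplification)."""
    last_arrival = max(arrival_times)
    return [last_arrival - t + collective_cost for t in arrival_times]

# Hypothetical arrival times in seconds for four ranks in one iteration;
# these are NOT taken from the Uintah run.
arrivals = [1.0, 2.0, 4.0, 4.0]
waits = collective_wait_times(arrivals)
mean_wait = mean(waits)

# Share of the real run spent in MPI_Allreduce(), from the profile above.
allreduce_fraction = 734.722 / 2112.575

print("per-rank wait:", waits)
print("mean wait:", mean_wait)
print(f"MPI_Allreduce() share of runtime: {allreduce_fraction:.1%}")
```

In this model the ranks that finish early accumulate all of the wait, so a mean profile can show a large MPI_Allreduce() time even when the reduction itself is cheap. Checking the per-node profiles for a wide spread in MPI_Allreduce() time would be one way to test the guess.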